Icelandair Group is one of Iceland’s largest airline corporations. The company includes Icelandair (a global airline), logistics, and several related business units. Traditionally, Icelandair Group acquired IT infrastructure capacity upfront and consumed it over time. Running out of IT capacity has serious business impacts, so a cushion was built into capacity planning analysis.

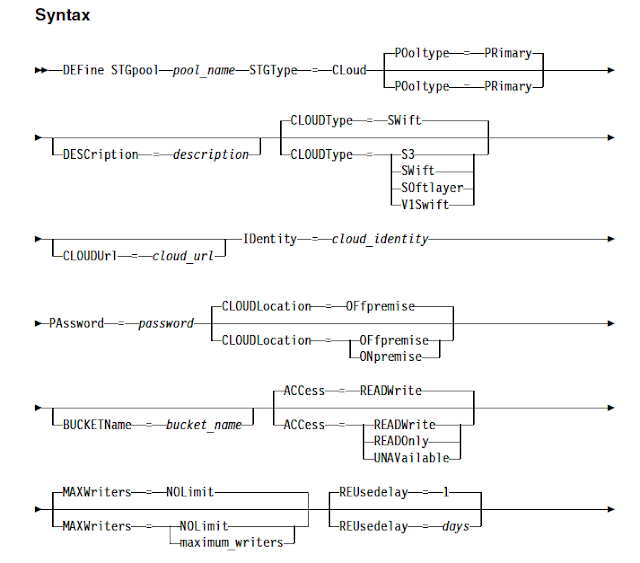

Icelandair Group recently made the strategic decision to migrate key IT workloads, such as applications and backups, to Amazon Web Services (AWS). The company determined that doing so would move essential services closer to its customers around the world, enhance business resiliency, and enable rapid scalability.

Migrating backups to Amazon S3 with the help of IBM Spectrum Protect V8 has significantly improved deployment, backup, and restore times.

The technical team found that AWS makes it easy to store data in multiple AWS Regions and to copy data between Regions for disaster recovery testing. They designed a series of progressively complex tests for AWS and IBM Spectrum Protect. The results of their tests are:

- IBM TSM V8 server deployment time was reduced from 72 hours to 10 minutes.

- By using TSM for VE for their VM backups, they found that VMware and server administrators can manage cloud backups and restores confidently, without reliance on backup software experts. Backup and restore operations are built into VMware vSphere Web Client. VMware administrators use a familiar interface without necessarily needing to learn new software. Restores can be initiated faster, and problems can be noticed sooner.

- Reliability and performance requirements were met or exceeded. Backup throughput scaled up easily until the network connection was saturated, indicating that both AWS and IBM Spectrum Protect are performance-optimized.

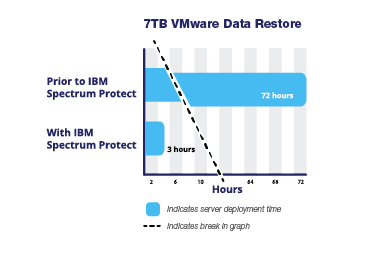

- The time required to restore 7 terabytes of VMware data was reduced from 72 hours to 3 hours. Performance improvements were due to multiple changes in IBM Spectrum Protect, including multithreaded restores, compression, and deduplication.
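
To put the reported figures in perspective, the short Python sketch below works out the speedup factors and the average effective restore rate implied by the numbers above. It assumes decimal units (1 TB = 1,000,000 MB) and a constant rate over the whole restore. The function names are illustrative only and are not part of any AWS or IBM tool. Because compression and deduplication reduce the amount of data actually transferred, the rate shown is the effective rate for restored data, not necessarily the network throughput.

```python
def speedup(before_minutes: float, after_minutes: float) -> float:
    """Return how many times faster the new duration is than the old one."""
    return before_minutes / after_minutes


def effective_rate_mb_per_s(terabytes: float, hours: float) -> float:
    """Average effective rate in MB/s, assuming decimal units (1 TB = 1,000,000 MB)."""
    megabytes = terabytes * 1_000_000
    seconds = hours * 3600
    return megabytes / seconds


# Figures taken from the test results listed above.
deploy_speedup = speedup(72 * 60, 10)          # 72 hours -> 10 minutes
restore_speedup = speedup(72 * 60, 3 * 60)     # 72 hours -> 3 hours
rate_before = effective_rate_mb_per_s(7, 72)   # 7 TB restored in 72 hours
rate_after = effective_rate_mb_per_s(7, 3)     # 7 TB restored in 3 hours

print(f"Deployment speedup: {deploy_speedup:.0f}x")
print(f"Restore speedup:    {restore_speedup:.0f}x")
print(f"Restore rate before: {rate_before:.0f} MB/s")
print(f"Restore rate after:  {rate_after:.0f} MB/s")
```

Under these assumptions, deployment is about 432 times faster, the restore is 24 times faster, and the average effective restore rate rises from roughly 27 MB/s to roughly 648 MB/s.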